Tutorial: Building a Unified Log Management Stack for a Kubernetes Cluster (Elasticsearch + Filebeat + Kibana + Metricbeat)

Preface

I put these notes together while studying this topic and am sharing them here.

One thing to say up front: this stack is very hardware-hungry, and you need a well-specced laptop to run it.

My cluster is deployed on three virtual machines: one control-plane node and two worker nodes.

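I struggled for two days without getting it to run and then gave up. After some digging, the cause was still insufficient resources. Two days of holiday wasted :(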

Then I slept on it, and when I got up it was working. It barely runs, and it is extremely sluggish.

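This post covers two things: building a cluster-wide log management stack (Elasticsearch + Filebeat + Kibana + Metricbeat), and notes on the pitfalls met along the way.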

Parts of this post draw on reference material.

All I ever wanted was to live out what was trying to emerge from within me. Why was that so very difficult? ------ Hermann Hesse, Demian

Solution Overview

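Once a Kubernetes platform is deployed, a large number of applications are installed and run on it, and together with the platform's own components they produce large volumes of logs of every kind. Below we walk through a concrete approach to log collection, transport, storage, and analysis, and how to put it into practice.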

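Logs on a Kubernetes platform fall into two categories.

The first mainly covers logs produced while the platform itself is running.

The second, application logs, covers logs produced by the applications deployed on the platform. Application logs inside a container are generally written to standard output,

and Docker can receive and redirect them through its logging driver.

Logs that a container writes to the console are all saved under /var/lib/docker/containers/, in a directory named after the container ID, as a file named <container-id>-json.log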

┌──[root@vms81.liruilongs.github.io]-[/]

└─$cd /var/lib/docker/containers/

┌──[root@vms81.liruilongs.github.io]-[/var/lib/docker/containers]

└─$ls

0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0 5fde81847fefef953a4575a98e850ce59e1f12c870c9f3e0707a993b8fbecdf0 a72407dce4ac3cd294f8cd39e3fe9b882cbab98d97ffcfad5595f85fb15fec86

。。。。。。。。。。。。。。。。。。。。

a66a7eee79ced84e6d9201ee38f9ab887f7bfc0103e1172e107b337108a63638 ff545c3a08264a28968800fe0fb2bbd5f4381029f089e9098c9e1484d310fcc1

┌──[root@vms81.liruilongs.github.io]-[/var/lib/docker/containers]

└─$cd 0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0;ls

0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0-json.log checkpoints config.v2.json hostconfig.json mounts

┌──[root@vms81.liruilongs.github.io]-[/var/lib/docker/containers/0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0]

└─$
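The naming convention visible in the listing above can be captured in a small helper. This is a minimal sketch that assumes Docker's default data root (/var/lib/docker) and the json-file logging driver; the function name container_log_path is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print the json-file log path for a container ID.
# Assumes Docker's default data root and the json-file logging driver.
container_log_path() {
    printf '/var/lib/docker/containers/%s/%s-json.log\n' "$1" "$1"
}

# Example (run as root on the node that hosts the container):
# tail -n 5 "$(container_log_path 0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0)"
```

If the Docker data root or the logging driver has been changed on a node, the path will differ, so treat the helper as a convenience rather than a guarantee.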

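For data of this volume, consider redirecting it over the network straight to the collector instead of staging it in files first. When collecting these logs, it is especially important that each record carries identity information about its source, such as topology information and IP address. Because application logs are so large, their retention period should generally be set according to the system's requirements, and the store should be protected against running out of space.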

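In a cluster, a complete application or service involves a large number of running components, and both the Node each component runs on and the number of instances can change. It is therefore necessary to collect and search logs centrally at the cluster level. That is where ELK and EFK come in.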

ELK

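ELK is short for ElasticSearch, Logstash, and Kibana, and is the core suite for container log management.

ElasticSearch: a real-time full-text search and analytics engine built on Apache Lucene. It provides three functions for structured data: collection, analysis, and storage. It exposes both REST and Java API interfaces.

Logstash: a log collection and processing framework, and also a tool for forwarding and filtering logs. It supports many types of logs, including system logs, error logs, and custom application logs. It can receive logs from many sources, including syslog, messaging systems (for example RabbitMQ), and JMX, and it can output data in many ways, including email, WebSockets, and ElasticSearch.

Kibana: a graphical web application. It calls the REST or Java API provided by ElasticSearch to search the logs stored there, analyzes them around a chosen topic, and presents the results as visualizations. Kibana also supports custom dashboard views that show different information to meet different needs.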

EFK

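EFK: Logstash has relatively low performance, consumes a lot of resources, lacks message-queue buffering, and can lose data, so it is commonly replaced by a lighter collector such as Filebeat, which is what this post uses.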

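For the components involved in this logging stack, the official site of each project is worth a visit.

Deploying a unified log management system in a K8s cluster has the following two prerequisites.

Certificates are configured correctly.

The required services are started and running.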

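Note that this stack involves several RBAC resource objects, and they have to be adjusted by comparing the resource files in the Helm charts with the API versions the current cluster serves. RBAC-based authorization was introduced in Kubernetes 1.5, promoted to Beta in 1.6, and promoted to GA in 1.8. The 7.9.1 charts used here still reference rbac.authorization.k8s.io/v1beta1, while the cluster runs v1.22.2 and serves only rbac.authorization.k8s.io/v1, so the resource files need to be modified.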

┌──[root@vms81.liruilongs.github.io]-[~/efk]

└─$kubectl api-versions | grep rbac

rbac.authorization.k8s.io/v1

┌──[root@vms81.liruilongs.github.io]-[~/efk]

└─$
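The check above can be turned into a reusable test for one exact API version. This is a sketch under the assumption that the output of kubectl api-versions is piped in on stdin; serves_api_version is a made-up name.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the exact API group/version given as $1
# appears in the `kubectl api-versions` output supplied on stdin.
# -x matches whole lines and -F disables regex, so asking for ".../v1"
# is not satisfied by a line that reads ".../v1beta1".
serves_api_version() {
    grep -qxF "$1"
}

# Usage against a live cluster:
# kubectl api-versions | serves_api_version rbac.authorization.k8s.io/v1beta1 \
#     || echo "v1beta1 RBAC is not served; chart templates must use rbac.authorization.k8s.io/v1"
```

Matching the whole line matters here because a plain grep for the v1 string would also match v1beta1.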

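Architecture Overview

A quick word on the architecture, which is similar to the K8s cluster monitoring setup I described earlier. Below is the list of related pods once everything is deployed. The Elasticsearch cluster serves as the log storage platform. Upstream it connects to Kibana (a web application that calls the Elasticsearch API to query its data). Downstream it connects to Filebeat and Metricbeat, which collect logs and metrics and hand them to Elasticsearch.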

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

....

elasticsearch-master-0 1/1 Running 2 (3h21m ago) 3h55m 10.244.70.40 vms83.liruilongs.github.io

elasticsearch-master-1 1/1 Running 2 (3h21m ago) 3h55m 10.244.171.163 vms82.liruilongs.github.io

filebeat-filebeat-64f5b 1/1 Running 7 (168m ago) 3h54m 10.244.70.36 vms83.liruilongs.github.io

filebeat-filebeat-g4cw8 1/1 Running 8 (168m ago) 3h54m 10.244.171.185 vms82.liruilongs.github.io

kibana-kibana-f88767f86-vqnms 1/1 Running 8 (167m ago) 3h57m 10.244.171.159 vms82.liruilongs.github.io

........

metricbeat-kube-state-metrics-75c5fc65d9-86fvh 1/1 Running 0 13m 10.244.70.43 vms83.liruilongs.github.io

metricbeat-metricbeat-895qz 1/1 Running 0 13m 10.244.171.172 vms82.liruilongs.github.io

metricbeat-metricbeat-metrics-7c5cd7d77f-22fgr 1/1 Running 0 13m 10.244.70.45 vms83.liruilongs.github.io

metricbeat-metricbeat-n2gx2 1/1 Running 0 13m 10.244.70.46 vms83.liruilongs.github.io

.........

Environment

Helm version

┌──[root@vms81.liruilongs.github.io]-[/var/lib/docker/containers/0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0]

└─$helm version

version.BuildInfo{Version:"v3.2.1", GitCommit:"fe51cd1e31e6a202cba7dead9552a6d418ded79a", GitTreeState:"clean", GoVersion:"go1.13.10"}

K8s cluster version

┌──[root@vms81.liruilongs.github.io]-[/var/lib/docker/containers/0606dd217f4f2f315f86fb378ffa65ed0c59ba7580869b9a88634dd6e171fdc0]

└─$kubectl get nodes

NAME STATUS ROLES AGE VERSION

vms81.liruilongs.github.io Ready control-plane,master 54d v1.22.2

vms82.liruilongs.github.io Ready 54d v1.22.2

vms83.liruilongs.github.io Ready 54d v1.22.2

EFK (Helm) repository setup

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm repo add elastic https://helm.elastic.co

"elastic" has been added to your repositories

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm repo list

NAME URL

azure http://mirror.azure.cn/kubernetes/charts/

ali https://apphub.aliyuncs.com

liruilong_repo http://192.168.26.83:8080/charts

stable https://charts.helm.sh/stable

prometheus-community https://prometheus-community.github.io/helm-charts

elastic https://helm.elastic.co

The version installed is 7.9.1: --version=7.9.1

Downloading the EFK charts

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm pull elastic/elasticsearch --version=7.9.1

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm pull elastic/filebeat --version=7.9.1

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm pull elastic/metricbeat --version=7.9.1

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm pull elastic/kibana --version=7.9.1

List the downloaded charts. Once they are downloaded, simply unpack each one with tar zxf

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$ls *7.9.1*

elasticsearch-7.9.1.tgz filebeat-7.9.1.tgz kibana-7.9.1.tgz metricbeat-7.9.1.tgz
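The four pull commands and the unpack step can be folded into one loop. This is a sketch, not the procedure used in this post: it relies on helm pull's --untar flag instead of tar zxf, and HELM is a hypothetical override variable that lets the loop be dry-run with HELM=echo.

```shell
#!/bin/sh
# Sketch: pull and unpack the four charts in one loop.
# HELM is a hypothetical override so the loop can be dry-run (HELM=echo).
pull_charts() {
    version="$1"
    for chart in elasticsearch filebeat metricbeat kibana; do
        # --untar unpacks the chart into ./<chart> after downloading it
        "${HELM:-helm}" pull "elastic/$chart" --version="$version" --untar || return 1
    done
}

# Usage (assumes the "elastic" repo has already been added):
# pull_charts 7.9.1
```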

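Importing the required images

Note that pulling these images is very slow, so it is best to download them ahead of time. Below are the commands I ran to load them on the worker nodes. Keep in mind that every node needs the images loaded.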

┌──[root@vms82.liruilongs.github.io]-[/]

└─$docker load -i elastic7.9.1.tar

....

Loaded image: docker.elastic.co/elasticsearch/elasticsearch:7.9.1

┌──[root@vms82.liruilongs.github.io]-[/]

└─$docker load -i filebeat7.9.1.tar

....

Loaded image: docker.elastic.co/beats/filebeat:7.9.1

┌──[root@vms82.liruilongs.github.io]-[/]

└─$docker load -i kibana7.9.1.tar

....

Loaded image: docker.elastic.co/kibana/kibana:7.9.1

┌──[root@vms82.liruilongs.github.io]-[/]

└─$docker load -i metricbeat7.9.1.tar

.....

Loaded image: docker.elastic.co/beats/metricbeat:7.9.1

┌──[root@vms82.liruilongs.github.io]-[/]

└─$
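Since the same four docker load commands have to be repeated on every node, a small loop helps. The sketch below is an assumption-laden convenience: load_images and the DOCKER override variable are made up for illustration, and the archive names are the ones shown above.

```shell
#!/bin/sh
# Sketch: load a list of image archives, stopping at the first failure.
# DOCKER is a hypothetical override so the loop can be dry-run (DOCKER=echo).
load_images() {
    for archive in "$@"; do
        # Fail early with a clear message if an archive was not copied to this node
        [ -f "$archive" ] || { echo "missing archive: $archive" >&2; return 1; }
        "${DOCKER:-docker}" load -i "$archive" || return 1
    done
}

# Usage on each node, from the directory that holds the archives:
# load_images elastic7.9.1.tar filebeat7.9.1.tar kibana7.9.1.tar metricbeat7.9.1.tar
```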

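Installing EFK with Helm

Note that some configuration files still need to be modified to suit the cluster.

Installing Elasticsearch

Adjusting the ES cluster parameters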

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$cd elasticsearch/

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$ls

Chart.yaml examples Makefile README.md templates values.yaml

Change the replica count to 2. We only have two worker nodes, so only two replicas can be deployed.

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$cat values.yaml | grep repli

replicas: 3

# # Add a template to adjust number of shards/replicas

# curl -XPUT "$ES_URL/_template/$TEMPLATE_NAME" -H 'Content-Type: application/json' -d'{"index_patterns":['\""$INDEX_PATTERN"\"'],"settings":{"number_of_shards":'$SHARD_COUNT',"number_of_replicas":'$REPLICA_COUNT'}}'

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$sed 's#replicas: 3#replicas: 2#g' values.yaml | grep replicas:

replicas: 2

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$sed -i 's#replicas: 3#replicas: 2#g' values.yaml

Set the cluster's minimum number of master nodes to 1

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$cat values.yaml | grep mini

minimumMasterNodes: 2

## ref: https://kubernetes.io/docs/tasks/administer-cluster/configure-multiple-schedulers/

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$sed -i 's#minimumMasterNodes: 2#minimumMasterNodes: 1#g' values.yaml

Change the data persistence setting. Here persistence is disabled.

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$cat values.yaml | grep -A 2 persistence:

persistence:

enabled: false

labels:

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/elasticsearch]

└─$
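As an alternative to editing values.yaml in place with sed, the same three settings can live in a separate override file passed to helm install with -f. This is a sketch of that approach, not what was done in this post; the file name es-overrides.yaml and the function name are arbitrary.

```shell
#!/bin/sh
# Sketch: write the three overrides to their own file and leave the chart's
# values.yaml untouched. The keys are the ones changed in the steps above.
write_es_overrides() {
    cat > "$1" <<'EOF'
replicas: 2
minimumMasterNodes: 1
persistence:
  enabled: false
EOF
}

# Usage, from the directory that contains the unpacked chart:
# write_es_overrides es-overrides.yaml
# helm install elasticsearch ./elasticsearch -f es-overrides.yaml
```

Keeping the overrides separate makes it easier to see what differs from the chart defaults when the chart is upgraded later.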

Install Elasticsearch

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm install elasticsearch elasticsearch

NAME: elasticsearch

LAST DEPLOYED: Sat Feb 5 03:15:45 2022

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

NOTES:

1. Watch all cluster members come up.

$ kubectl get pods --namespace=kube-system -l app=elasticsearch-master -w

2. Test cluster health using Helm test.

$ helm test elasticsearch --cleanup

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$kubectl get pods --namespace=kube-system -l app=elasticsearch-master -w

NAME READY STATUS RESTARTS AGE

elasticsearch-master-0 0/1 Running 0 41s

elasticsearch-master-1 0/1 Running 0 41s

After waiting a while, check the pod status. The Elasticsearch cluster has installed successfully.

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$kubectl get pods --namespace=kube-system -l app=elasticsearch-master

NAME READY STATUS RESTARTS AGE

elasticsearch-master-0 1/1 Running 0 2m23s

elasticsearch-master-1 1/1 Running 0 2m23s

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$

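Installing Metricbeat

Installing Metricbeat requires attention to the API versions used in its resource files. Installing it directly reports the error below.

Resolving the error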

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$helm install metricbeat .

Error: unable to build kubernetes objects from release manifest: [unable to recognize "": no matches for kind "ClusterRole" in version "rbac.authorization.k8s.io/v1beta1", unable to recognize "": no matches for kind "ClusterRoleBinding" in version "rbac.authorization.k8s.io/v1beta1"]

The fix is to change the API version directly wherever it appears

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat/charts/kube-state-metrics/templates]

└─$sed -i 's#rbac.authorization.k8s.io/v1beta1#rbac.authorization.k8s.io/v1#g' *.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$helm install metricbeat .

NAME: metricbeat

LAST DEPLOYED: Sat Feb 5 03:42:48 2022

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

1. Watch all containers come up.

$ kubectl get pods --namespace=kube-system -l app=metricbeat-metricbeat -w

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$kubectl get pods --namespace=kube-system -l app=metricbeat-metricbeat

NAME READY STATUS RESTARTS AGE

metricbeat-metricbeat-cvqsm 1/1 Running 0 65s

metricbeat-metricbeat-gfdqz 1/1 Running 0 65s
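The sed fix above only touches the *.yaml files in one templates directory. A variant that walks a whole chart directory is sketched below, on the assumption that every occurrence of rbac.authorization.k8s.io/v1beta1 under it should become rbac.authorization.k8s.io/v1; the function name rbac_to_v1 is made up.

```shell
#!/bin/sh
# Sketch: rewrite the removed RBAC API version in every YAML file under a directory.
# Writes through a temp file instead of `sed -i` so it behaves the same with
# GNU and BSD sed, and prints each file it changes.
rbac_to_v1() {
    find "$1" -type f -name '*.yaml' | while IFS= read -r file; do
        if grep -qF 'rbac.authorization.k8s.io/v1beta1' "$file"; then
            sed 's#rbac.authorization.k8s.io/v1beta1#rbac.authorization.k8s.io/v1#g' "$file" > "$file.tmp" \
                && cat "$file.tmp" > "$file" \
                && rm -f "$file.tmp" \
                && echo "updated: $file"
        fi
    done
}

# Usage, from the directory that contains the unpacked chart:
# rbac_to_v1 ./metricbeat
```

Review the list of updated files before installing, since a blanket rewrite is only safe when the v1 schema of the affected kinds matches what the templates already contain.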

Installing Filebeat

Install Filebeat

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/filebeat]

└─$helm install filebeat .

NAME: filebeat

LAST DEPLOYED: Sat Feb 5 03:27:13 2022

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

1. Watch all containers come up.

$ kubectl get pods --namespace=kube-system -l app=filebeat-filebeat -w

Check the pod status after a moment. The installation succeeded.

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/filebeat]

└─$kubectl get pods --namespace=kube-system -l app=filebeat-filebeat -w

NAME READY STATUS RESTARTS AGE

filebeat-filebeat-df4s4 1/1 Running 0 20s

filebeat-filebeat-hw9xh 1/1 Running 0 21s

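Installing Kibana

Changing the Service type

Note that we need to reach Kibana from outside the cluster, so the Service type has to be changed to NodePort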

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$cat values.yaml | grep -A 2 "type: ClusterIP"

type: ClusterIP

loadBalancerIP: ""

port: 5601

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$sed -i 's#type: ClusterIP#type: NodePort#g' values.yaml

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$cat values.yaml | grep -A 3 service:

service:

type: NodePort

loadBalancerIP: ""

port: 5601

Install Kibana

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$helm install kibana .

NAME: kibana

LAST DEPLOYED: Sat Feb 5 03:47:07 2022

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

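Resolving the Pending state

The pod never gets created. Looking at the events shows that CPU and memory are insufficient, so the only option is to resize the virtual machines.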

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$kubectl get pods kibana-kibana-f88767f86-fsbhf

NAME READY STATUS RESTARTS AGE

kibana-kibana-f88767f86-fsbhf 0/1 Pending 0 6m14s

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$kubectl describe pods kibana-kibana-f88767f86-fsbhf | grep -A 10 -i events

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 4s (x7 over 6m33s) default-scheduler 0/3 nodes are available: 1 node(s) had taint {node-role.kubernetes.io/master: }, that the pod didn't tolerate, 2 Insufficient cpu, 2 Insufficient memory.

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/kibana]

└─$

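After resizing, you may still see the error below. From what I found, it is still a resource problem, and I had no more resources to give. I called it a night, and when I woke up it was working.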

Readiness probe failed: Error: Got HTTP code 000 but expected a 200

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$kubectl describe pods kibana-kibana-f88767f86-qkrk7 | grep -i -A 10 event

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 8m21s default-scheduler Successfully assigned kube-system/kibana-kibana-f88767f86-qkrk7 to vms83.liruilongs.github.io

Normal Pulled 7m42s kubelet Container image "docker.elastic.co/kibana/kibana:7.9.1" already present on machine

Normal Created 7m42s kubelet Created container kibana

Normal Started 7m41s kubelet Started container kibana

Warning Unhealthy 4m17s (x19 over 7m30s) kubelet Readiness probe failed: Error: Got HTTP code 000 but expected a 200

Warning Unhealthy 2m19s (x6 over 7m5s) kubelet Readiness probe failed:

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$

There are write-ups of possible fixes for this error that are worth a look if you run into it.

helm ls shows that the EFK stack has been installed

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$helm ls

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

elasticsearch kube-system 1 2022-02-05 03:15:45.827750596 +0800 CST deployed elasticsearch-7.9.1 7.9.1

filebeat kube-system 1 2022-02-05 03:27:13.473157636 +0800 CST deployed filebeat-7.9.1 7.9.1

kibana kube-system 1 2022-02-05 03:47:07.618651858 +0800 CST deployed kibana-7.9.1 7.9.1

metricbeat kube-system 1 2022-02-05 03:42:48.807772112 +0800 CST deployed metricbeat-7.9.1 7.9.1

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create]

└─$

The pod list shows that all pods are now Running

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-78d6f96c7b-85rv9 1/1 Running 327 (172m ago) 51d 10.244.88.83 vms81.liruilongs.github.io

calico-node-6nfqv 1/1 Running 364 (172m ago) 54d 192.168.26.81 vms81.liruilongs.github.io

calico-node-fv458 1/1 Running 70 (172m ago) 54d 192.168.26.83 vms83.liruilongs.github.io

calico-node-h5lsq 1/1 Running 135 (3h25m ago) 54d 192.168.26.82 vms82.liruilongs.github.io

coredns-7f6cbbb7b8-ncd2s 1/1 Running 33 (3h ago) 51d 10.244.88.84 vms81.liruilongs.github.io

coredns-7f6cbbb7b8-pjnct 1/1 Running 30 (3h35m ago) 51d 10.244.88.82 vms81.liruilongs.github.io

elasticsearch-master-0 1/1 Running 2 (3h21m ago) 3h55m 10.244.70.40 vms83.liruilongs.github.io

elasticsearch-master-1 1/1 Running 2 (3h21m ago) 3h55m 10.244.171.163 vms82.liruilongs.github.io

etcd-vms81.liruilongs.github.io 1/1 Running 134 (6h58m ago) 54d 192.168.26.81 vms81.liruilongs.github.io

filebeat-filebeat-64f5b 1/1 Running 7 (168m ago) 3h54m 10.244.70.36 vms83.liruilongs.github.io

filebeat-filebeat-g4cw8 1/1 Running 8 (168m ago) 3h54m 10.244.171.185 vms82.liruilongs.github.io

kibana-kibana-f88767f86-vqnms 1/1 Running 8 (167m ago) 3h57m 10.244.171.159 vms82.liruilongs.github.io

kube-apiserver-vms81.liruilongs.github.io 1/1 Running 32 (179m ago) 20d 192.168.26.81 vms81.liruilongs.github.io

kube-controller-manager-vms81.liruilongs.github.io 1/1 Running 150 (170m ago) 52d 192.168.26.81 vms81.liruilongs.github.io

kube-proxy-scs6x 1/1 Running 22 (3h21m ago) 54d 192.168.26.82 vms82.liruilongs.github.io

kube-proxy-tbwz5 1/1 Running 30 (6h58m ago) 54d 192.168.26.81 vms81.liruilongs.github.io

kube-proxy-xccmp 1/1 Running 11 (3h21m ago) 54d 192.168.26.83 vms83.liruilongs.github.io

kube-scheduler-vms81.liruilongs.github.io 1/1 Running 302 (170m ago) 54d 192.168.26.81 vms81.liruilongs.github.io

metricbeat-kube-state-metrics-75c5fc65d9-86fvh 1/1 Running 0 13m 10.244.70.43 vms83.liruilongs.github.io

metricbeat-metricbeat-895qz 1/1 Running 0 13m 10.244.171.172 vms82.liruilongs.github.io

metricbeat-metricbeat-metrics-7c5cd7d77f-22fgr 1/1 Running 0 13m 10.244.70.45 vms83.liruilongs.github.io

metricbeat-metricbeat-n2gx2 1/1 Running 0 13m 10.244.70.46 vms83.liruilongs.github.io

metrics-server-bcfb98c76-d58bg 1/1 Running 3 (3h13m ago) 6h28m 10.244.70.31 vms83.liruilongs.github.io

Check the core node metrics

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

vms81.liruilongs.github.io 335m 16% 1443Mi 46%

vms82.liruilongs.github.io 372m 12% 2727Mi 57%

vms83.liruilongs.github.io 554m 13% 2513Mi 56%
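Because the scheduling failures earlier came down to CPU and memory, it can help to back out how much memory each node is treated as having. The sketch below estimates that from the used amount and percentage columns of kubectl top nodes; it is a rough calculation, and estimate_node_memory is a made-up name.

```shell
#!/bin/sh
# Sketch: estimate the memory figure that `kubectl top nodes` treats as 100%,
# reading its output on stdin. estimate = used * 100 / percent, truncated to an integer.
# awk's "+ 0" converts "2727Mi" and "57%" to numbers by reading their leading digits.
estimate_node_memory() {
    awk 'NR > 1 && $5 + 0 > 0 { printf "%s %dMi\n", $1, ($4 + 0) * 100 / ($5 + 0) }'
}

# Usage against a live cluster (requires metrics-server):
# kubectl top nodes | estimate_node_memory
```

The percentages are rounded in the kubectl output, so the result is only accurate to within a few percent.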

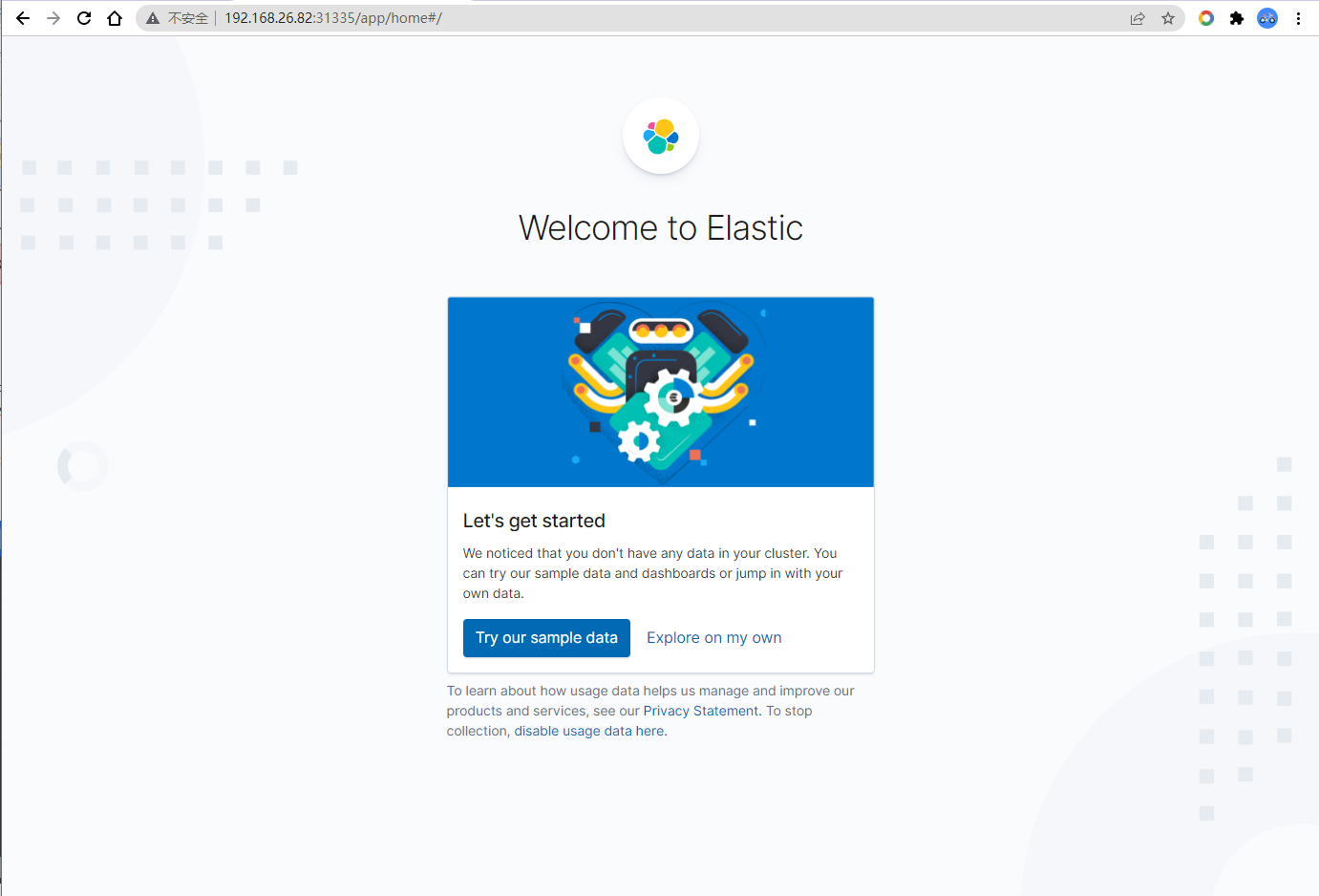

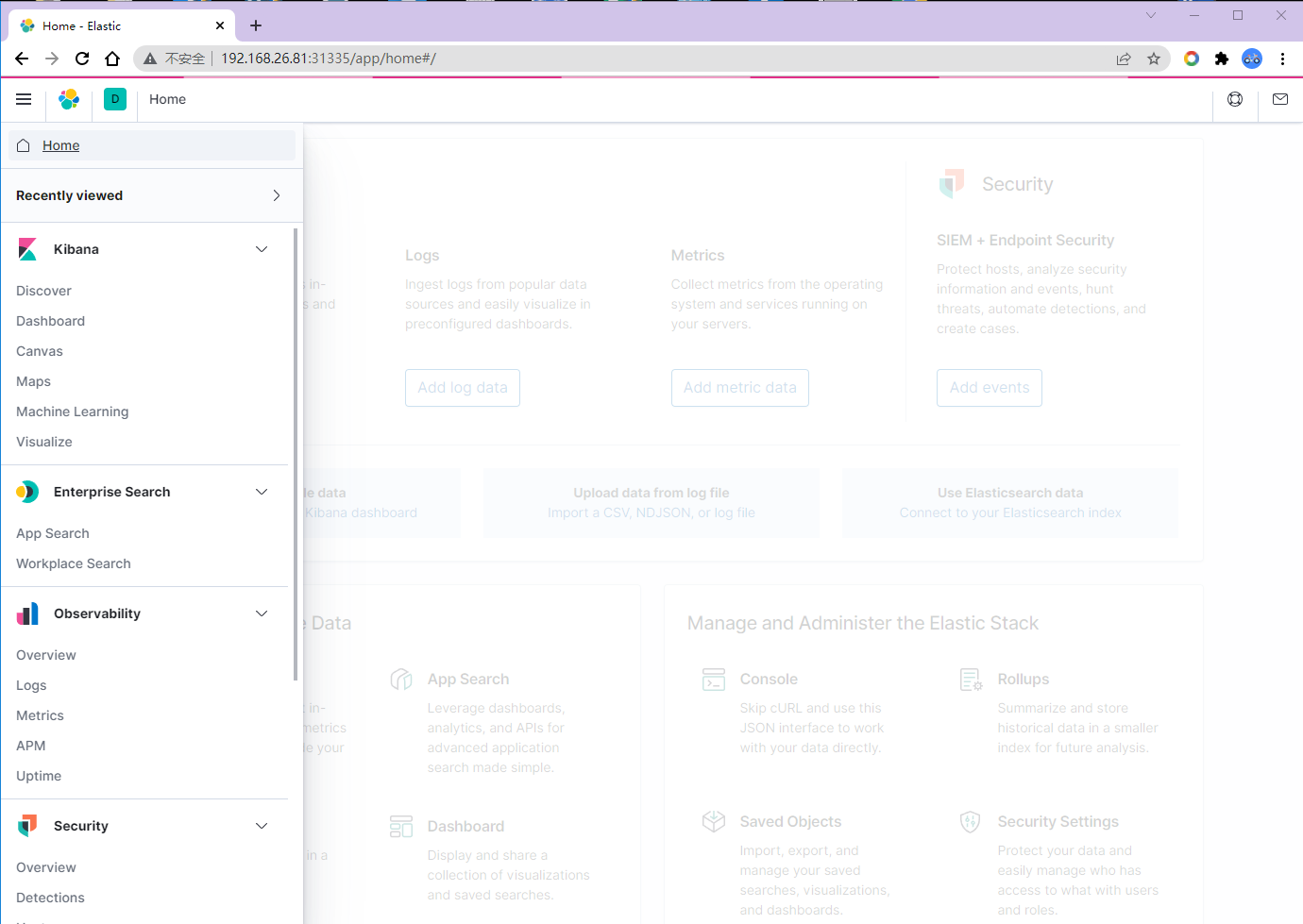

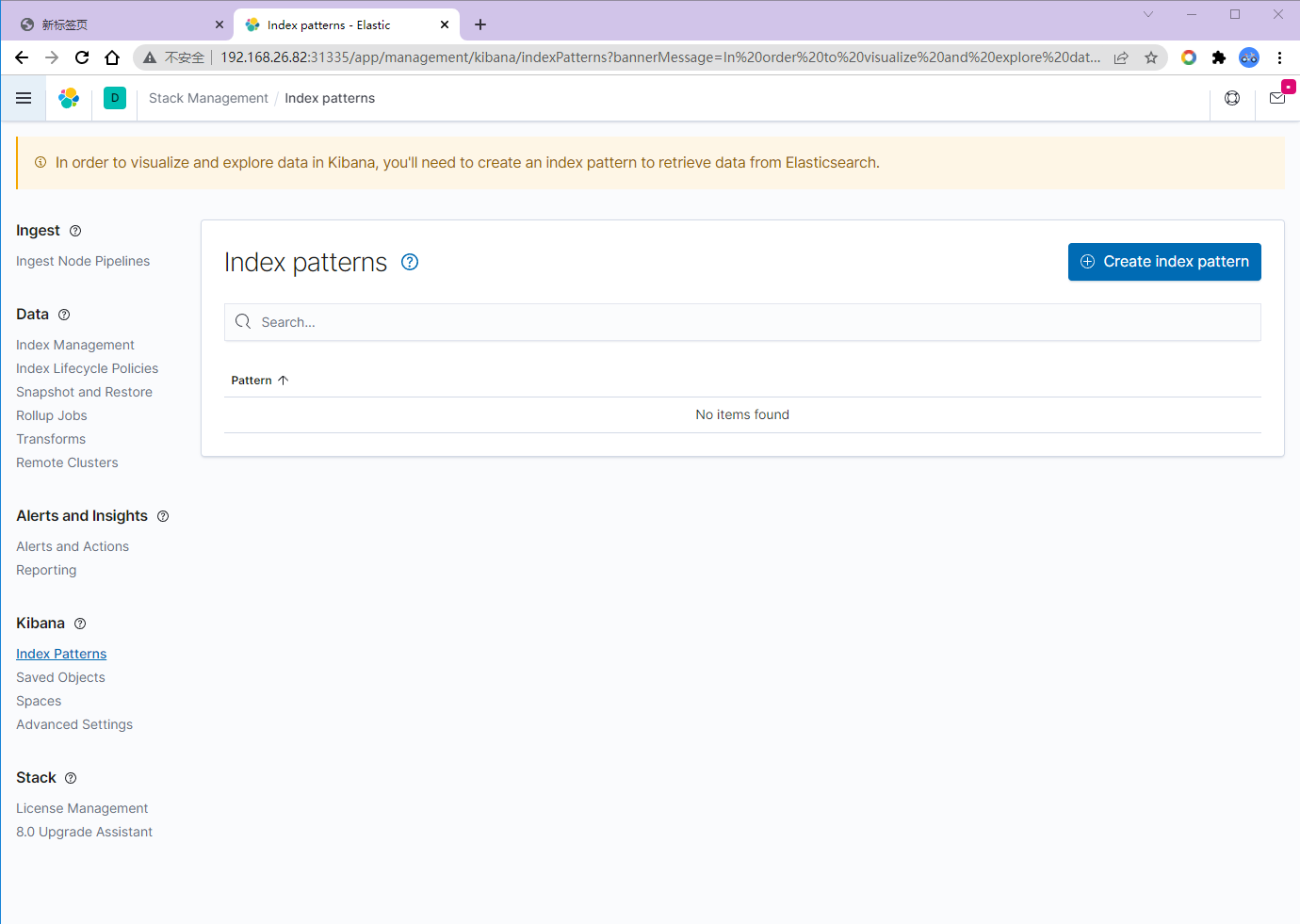

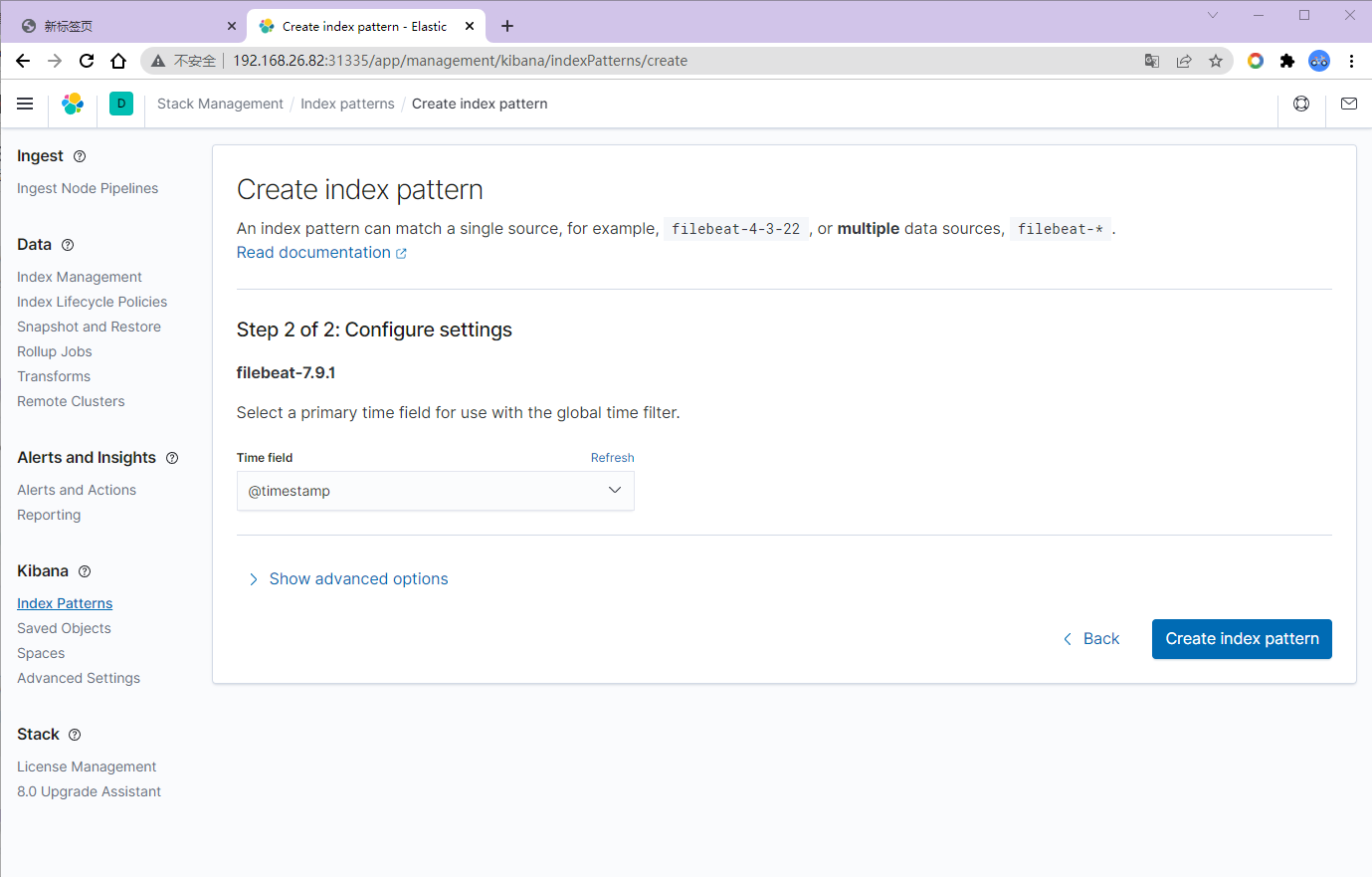

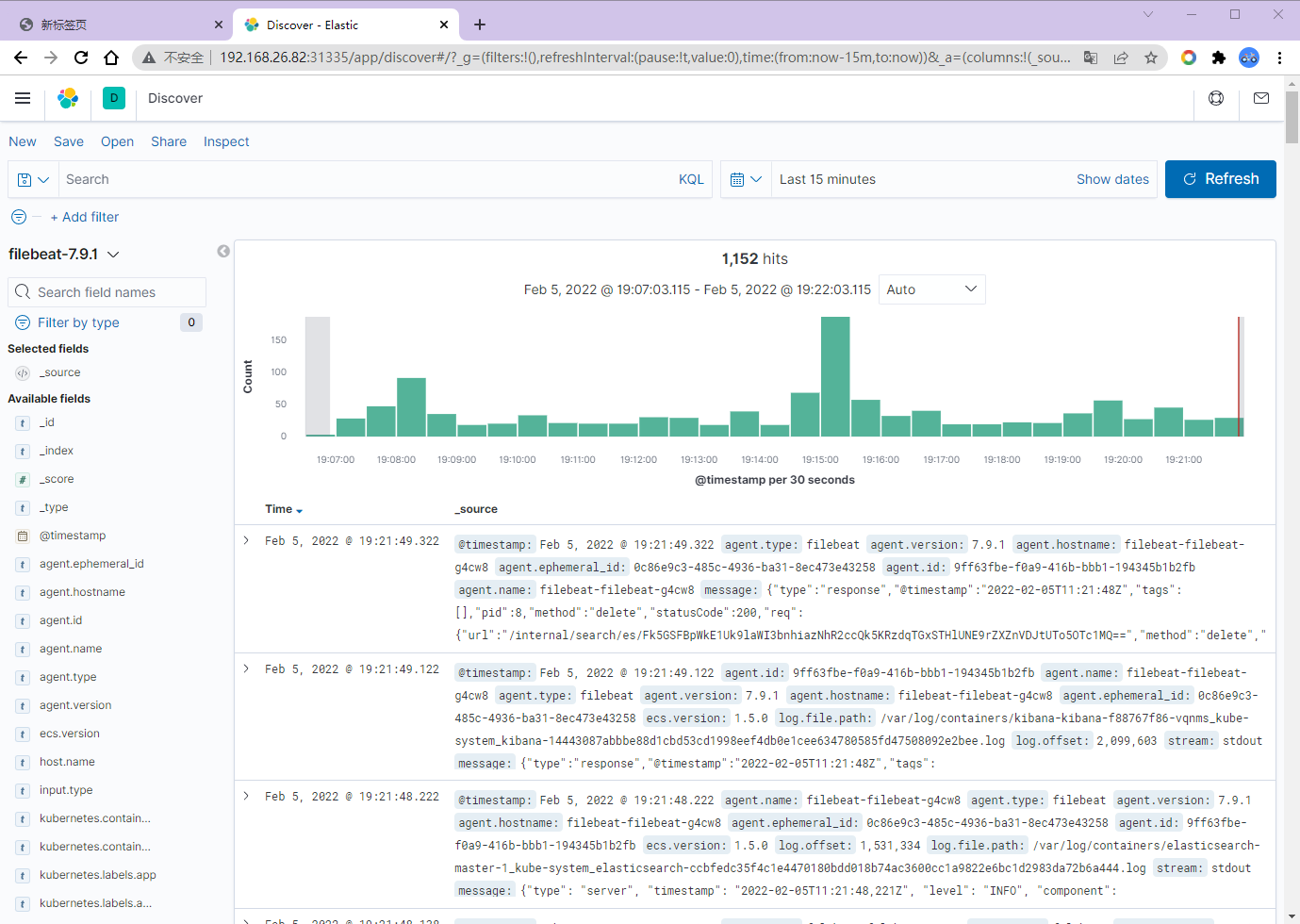

Testing EFK

Look up the port Kibana is exposed on and test access

┌──[root@vms81.liruilongs.github.io]-[~/ansible/k8s-helm-create/metricbeat]

└─$kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticsearch-master ClusterIP 10.96.232.233 9200/TCP,9300/TCP 3h44m

elasticsearch-master-headless ClusterIP None 9200/TCP,9300/TCP 3h44m

kibana-kibana NodePort 10.100.170.124 5601:31335/TCP 3h46m

kube-dns ClusterIP 10.96.0.10 53/UDP,53/TCP,9153/TCP 54d

liruilong-kube-prometheus-kubelet ClusterIP None 10250/TCP,10255/TCP,4194/TCP 20d

metricbeat-kube-state-metrics ClusterIP 10.108.35.197 8080/TCP 115s

metrics-server ClusterIP 10.111.104.173 443/TCP 54d

nginxdep NodePort 10.106.217.50 8888:31964/TCP 2d20h
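The node port is the number after the colon in the PORT(S) column for kibana-kibana. A small helper can extract it and build the URL to open in a browser. This is a sketch: node_port_of is a made-up name, and the node IP in the usage comment is taken from the pod listing earlier in this post.

```shell
#!/bin/sh
# Hypothetical helper: extract the node port from a PORT(S) value such as "5601:31335/TCP".
# Prints nothing when the value has no node port (for example "9200/TCP").
node_port_of() {
    printf '%s\n' "$1" | sed -n 's#^[0-9][0-9]*:\([0-9][0-9]*\)/.*$#\1#p'
}

# Usage with the values from the service listing above:
# echo "http://192.168.26.82:$(node_port_of 5601:31335/TCP)"
#
# On a live cluster the port can also be read directly:
# kubectl get svc kibana-kibana -o jsonpath='{.spec.ports[0].nodePort}'
```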